Technology giant Google is using AI and machine learning (ML) in the Recorder app on its Pixel 4 phones to record and transcribe audio, and it has explained how the app performs this ML processing on-device. Google had already released the Recorder app for Pixel 4 devices; according to the company, AI and ML are the core technologies behind it.

In its official post, Google explained the rationale behind the AI-based Recorder app. The company described speech as a dominant medium for sharing information, but noted that current ways of capturing and organizing spoken content are insufficient. Google aims to simplify this and make "ideas and conversations even more easily accessible and searchable."

Google is well known for organizing information such as text, photos, and videos and making it available to users through its search engine. Speech is another important medium for conveying information, and Google is now working on capturing the information contained in it. A huge amount of information is recorded in the form of videos, audio, and podcasts, and AI technologies can help capture it.

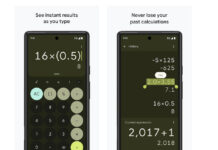

The Google Recorder app uses AI technologies and is architecturally divided into three parts. The transcription part handles automatic speech recognition (ASR) using a machine learning model. The "Faster voice typing" option can be downloaded so that voice typing is faster and also works offline. The model transcribes speech character by character.

Google optimized the machine learning model for long sessions, so it can run for hours or more without performance degradation. The app also lets users listen to an individual word in the transcription.
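To let a user listen to a specific word, each word in the transcript has to be linked to a position in the audio. The sketch below assumes the recognizer emits a start time for every word (the hypothetical `TimedWord` type); the article does not describe how Recorder stores this alignment. `seek_position` returns the playback offset for a selected word, and `word_at` uses binary search to find which word is playing at a given moment.

```python
import bisect
from dataclasses import dataclass
from typing import List


@dataclass
class TimedWord:
    text: str
    start_ms: int  # hypothetical per-word start time reported by the recognizer


def seek_position(words: List[TimedWord], index: int) -> int:
    """Playback offset (ms) to jump to when the user selects words[index]."""
    return words[index].start_ms


def word_at(words: List[TimedWord], position_ms: int) -> int:
    """Index of the word being spoken at position_ms.

    Assumes words are sorted by start_ms; returns -1 before the first word.
    """
    starts = [w.start_ms for w in words]
    # bisect_right finds the first start strictly after position_ms,
    # so the word just before it is the one currently playing.
    return bisect.bisect_right(starts, position_ms) - 1
```

Binary search keeps the lookup logarithmic in the number of words, which matters for transcripts of recordings that run for hours.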

Google did extensive research to find the clearest way to present this information visually. The app displays the audio as a series of bars, each representing a 50-millisecond segment of the waveform, and uses colors to show the dominant sound during that period.
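As a rough illustration of the 50 ms bars, the following sketch splits mono audio samples into consecutive 50 ms windows and uses each window's root-mean-square (RMS) amplitude as the bar height. The 16 kHz sample rate, the RMS measure, and the `bar_heights` helper are assumptions for illustration, not details Google has published about Recorder.

```python
import math
from typing import List

SAMPLE_RATE_HZ = 16_000  # assumed sample rate; not stated in the article
BAR_MS = 50              # each bar covers 50 ms of audio, as described above


def bar_heights(samples: List[float],
                sample_rate: int = SAMPLE_RATE_HZ,
                bar_ms: int = BAR_MS) -> List[float]:
    """Split mono samples into consecutive bar_ms windows and return the
    RMS amplitude of each window as its bar height.

    A trailing partial window, if any, still produces a bar.
    """
    window = sample_rate * bar_ms // 1000  # samples per bar (800 at 16 kHz)
    heights = []
    for start in range(0, len(samples), window):
        chunk = samples[start:start + window]
        heights.append(math.sqrt(sum(x * x for x in chunk) / len(chunk)))
    return heights
```

At 16 kHz each bar covers 800 samples, so one second of audio yields 20 bars.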

Google uses convolutional neural networks (CNNs) to classify sounds, such as a dog barking or a musical instrument playing, and presents them in an easily identifiable visualization. This makes the app much more useful. Recent advances in machine learning and deep learning make all of this possible.
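The coloring step can be sketched independently of the CNN itself: assume a classifier has already produced a score for each sound class in every bar, then pick the highest-scoring (dominant) class and look up its color. The class names, the `CLASS_COLORS` palette, and the `color_bars` helper are hypothetical; the article does not list Recorder's sound classes or colors.

```python
from typing import Dict, List

# Hypothetical palette: the article does not say which colors Recorder uses.
CLASS_COLORS: Dict[str, str] = {
    "speech": "#4285F4",
    "music": "#DB4437",
    "dog_bark": "#F4B400",
    "silence": "#9E9E9E",
}


def color_bars(bar_scores: List[Dict[str, float]],
               palette: Dict[str, str] = CLASS_COLORS,
               default: str = "#000000") -> List[str]:
    """For each bar, choose the class with the highest classifier score
    (the dominant sound) and return its color.

    Bars with no scores, or whose dominant class has no palette entry,
    get the default color.
    """
    colors = []
    for scores in bar_scores:
        if not scores:
            colors.append(default)
            continue
        dominant = max(scores, key=scores.get)  # argmax over class scores
        colors.append(palette.get(dominant, default))
    return colors
```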

Google also uses AI to suggest three tags that "represent the most memorable content" once the user completes a recording.

Most importantly, the app can suggest these tags immediately after the recording completes.
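The article does not say how the tags are chosen. As a stand-in, the sketch below uses plain term-frequency keyword extraction: it counts the non-stop-words in a finished transcript and returns the three most frequent. This is a deliberately simple substitute technique, not Google's method, and the `suggest_tags` function and its stop-word list are hypothetical.

```python
import re
from collections import Counter
from typing import List

# Small illustrative stop-word list; a real system would need a larger one.
STOP_WORDS = {"the", "a", "an", "and", "or", "to", "of", "in", "on", "is",
              "it", "we", "i", "you", "that", "this", "for", "with", "so"}


def suggest_tags(transcript: str, k: int = 3) -> List[str]:
    """Return the k most frequent non-stop-words (longer than two letters)
    in the transcript.

    Ties are broken by first appearance, because Counter.most_common keeps
    equal counts in insertion order.
    """
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS and len(w) > 2)
    return [word for word, _ in counts.most_common(k)]
```

Because this only counts words in a transcript that already exists, it runs in time linear in the transcript length, which is consistent with tags appearing as soon as a recording ends.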